Exploring Tools Used in Data Science: The Power of Data Science

Some common tools used in data science include Python, R, SQL, Jupyter Notebook, Tableau, TensorFlow, and scikit-learn.

Data science is a multidisciplinary field that relies on a range of tools to extract insights and value from data. These tools are the backbone of data science workflows: they support the collection, storage, manipulation, analysis, modeling, and visualization of data, as well as the deployment of models. From programming languages like Python and R to specialized platforms, cloud services, and visualization tools, they let data scientists tackle complex problems and put data to use.

In this blog, we will explore the essential tools used in data science and their role in data-driven decision-making and innovation. Here is a brief explanation of each.

Python

Python is a popular programming language used extensively in data science due to its simplicity, versatility, and rich ecosystem of libraries like Pandas, NumPy, and scikit-learn. It is often the language of choice for data manipulation, analysis, and machine learning tasks. Here are some key points explaining the significance of Python in data science:

- Simplicity: Python has a clean and intuitive syntax, making it easy to read and write code, especially for beginners.

- Extensive Libraries: Python offers a rich ecosystem of libraries and frameworks that are specifically designed for data science tasks. Popular libraries include Pandas for data manipulation, NumPy for numerical computations, and scikit-learn for machine learning.

- Data Manipulation: Python's Pandas library provides powerful data manipulation tools, enabling efficient handling, cleaning, and transformation of datasets.

- Machine Learning Capabilities: Python is widely used for building and deploying machine learning models. Libraries like scikit-learn, TensorFlow, and PyTorch offer comprehensive tools and algorithms for training and evaluating machine learning models.

- Integration: Python seamlessly integrates with other languages, tools, and frameworks, allowing data scientists to leverage existing code and collaborate with teams using different technologies.

- Data Visualization: Python offers multiple libraries for creating visualizations, such as Matplotlib, Seaborn, and Plotly. These libraries enable the creation of informative and visually appealing charts, graphs, and interactive plots.

- Community Support: Python has a vibrant and supportive community of data scientists and developers. There are abundant online resources, forums, and tutorials available to assist in learning and problem-solving.

- Deployment Flexibility: Python allows for easy deployment of data science models in various environments, including web applications, cloud platforms, and edge devices.

- Jupyter Notebooks: Python integrates seamlessly with Jupyter Notebooks, providing an interactive and collaborative environment for data exploration, analysis, and documentation.
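The data manipulation point above can be illustrated with a small cleaning-and-aggregation sketch. To stay dependency-free it uses only Python's standard library (`csv`, `statistics`) rather than Pandas, which would express the same steps more concisely. The sample data, the column names (`city`, `temp_c`), and the function name `mean_temp_by_city` are made up for illustration.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Hypothetical sample data; one Cairo row has a missing temperature.
RAW = """city,temp_c
Oslo,4.0
Oslo,6.0
Cairo,30.0
Cairo,
Cairo,34.0
"""


def mean_temp_by_city(text):
    """Drop rows with a missing temperature, then average per city."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(text)):
        if row["temp_c"] == "":  # cleaning: skip missing values
            continue
        # transformation: convert the text field to a number
        groups[row["city"]].append(float(row["temp_c"]))
    return {city: mean(values) for city, values in groups.items()}


result = mean_temp_by_city(RAW)
print(result)
```

The same three steps (load, clean, aggregate) are what a typical Pandas workflow performs on much larger datasets.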

R

R is another programming language widely used in data science, specifically for statistical analysis and data visualization. It offers a comprehensive suite of packages, including dplyr, ggplot2, and caret, that support various data science tasks.

- R is a programming language specifically designed for statistical computing and data analysis.

- It provides a wide range of packages and libraries that support various data science tasks, such as data manipulation, exploratory data analysis, and statistical modeling.

- R is particularly popular for its capabilities in data visualization, with packages like ggplot2 offering advanced and customizable plotting functionalities.

- It offers extensive statistical functions and algorithms, making it suitable for advanced statistical analysis and modeling.

- R has a strong and active community of data scientists and statisticians, providing a rich ecosystem of resources, tutorials, and support.

- With R, you can easily read and manipulate data from different file formats and databases.

- R integrates well with other programming languages and tools, so it fits into larger data science workflows.

- The flexibility and power of R make it suitable for both exploratory data analysis and the development of complex data science models and applications.

- R is an open-source language, which means it is free to use and continually developed and improved by a global community of contributors.

SQL and Databases

Structured Query Language (SQL) is used for managing and querying large datasets stored in relational databases. It enables data scientists to efficiently extract, filter, and manipulate data, making it an essential tool for working with structured data.

- SQL is a declarative language for managing and manipulating structured data in relational databases.

- It provides a set of commands for creating, querying, updating, and deleting data in a database.

- SQL allows data scientists to extract relevant information from large datasets using queries and filtering conditions.

- It supports operations such as joining tables, aggregating, sorting, and grouping data.

- SQL is widely used in data science for data exploration, data cleaning, data transformation, and preparing data for analysis.

- Understanding SQL is important for data scientists because it enables the efficient data retrieval and manipulation that working with structured data in databases requires.
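The operations listed above (filtering, joining, grouping, aggregating, and sorting) can be sketched with Python's built-in `sqlite3` module and an in-memory database. The `customers` and `orders` tables, their columns, and the sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'Ada'), (2, 'Grace');
    INSERT INTO orders VALUES (1, 1, 20.0), (2, 1, 35.0), (3, 2, 5.0), (4, 2, 50.0);
""")

# Join the two tables, filter out small orders, aggregate per customer,
# and sort by the aggregated total.
query = """
    SELECT c.name, COUNT(*) AS n_orders, SUM(o.amount) AS total
    FROM orders AS o
    JOIN customers AS c ON c.id = o.customer_id
    WHERE o.amount >= 10
    GROUP BY c.name
    ORDER BY total DESC
"""
rows = conn.execute(query).fetchall()
conn.close()
print(rows)
```

Pushing the filter and aggregation into the query means only the summarized rows reach Python, which is the main reason SQL is used for data preparation on large tables.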

Hadoop and Spark

These distributed processing frameworks are crucial for handling big data. Hadoop allows for distributed storage and processing, while Spark provides a faster and more flexible data processing engine, enabling large-scale data analysis and machine learning tasks.

Hadoop

- Hadoop is a distributed processing framework designed for storing and processing large volumes of structured and unstructured data.

- It provides a scalable and fault-tolerant storage system called the Hadoop Distributed File System (HDFS) to store data across multiple servers.

- Hadoop allows for distributed data processing using a programming model called MapReduce, which breaks down tasks into smaller sub-tasks that can be processed in parallel.

- It is commonly used in big data applications to handle large datasets and perform batch processing tasks.
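The MapReduce model described above can be imitated on a single machine. The sketch below is plain Python, not Hadoop's API: it only shows the map, shuffle, and reduce phases for a word count, and the function names and input strings are made up. In Hadoop, the map and reduce calls would run in parallel across the cluster.

```python
from collections import defaultdict


def map_phase(document):
    """Emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Group emitted values by key, as the framework does between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine all values for one key into a single result."""
    return key, sum(values)


# Hypothetical input splits; each could be mapped on a different node.
documents = ["big data big insights", "data drives decisions"]

# Here the phases run sequentially in one process.
mapped = [pair for doc in documents for pair in map_phase(doc)]
counts = dict(reduce_phase(key, values) for key, values in shuffle(mapped).items())
print(counts)
```

Because each `map_phase` call depends only on its own document and each `reduce_phase` call only on its own key, both phases can be spread across machines without coordination beyond the shuffle.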

Spark

- Spark is an open-source, distributed data processing and analytics framework designed for speed, ease of use, and advanced analytics.

- It provides an in-memory computing engine, which allows for faster data processing compared to traditional disk-based systems.

- Spark supports a wide range of data processing tasks, including batch processing, real-time streaming, machine learning, and graph processing.

- It offers a high-level programming interface in multiple languages, such as Scala, Python, and Java, making it accessible to a broader audience.

- Spark is known for its ability to handle large-scale data processing, iterative algorithms, and complex data analysis tasks efficiently.

Jupyter Notebooks

Jupyter Notebooks is a popular tool in the field of data science that provides an interactive environment for data exploration, analysis, and documentation. It supports multiple programming languages such as Python, R, and Julia. Jupyter Notebooks combine code, visualizations, and explanatory text in a single document, allowing data scientists to write, execute, and visualize code snippets step by step. Its versatility and interactivity make it ideal for prototyping, experimenting, and sharing data science workflows with others. With Jupyter Notebooks, data scientists can document their thought process, collaborate with team members, and create reproducible analyses that foster transparency and facilitate knowledge sharing in the data science community.

Tableau and Power BI

These powerful visualization tools allow data scientists to create interactive and visually appealing dashboards and reports. They enable data exploration, storytelling, and the communication of insights to stakeholders in a user-friendly manner.

Tableau

Tableau is a powerful and user-friendly data visualization tool known for its drag-and-drop interface and interactive capabilities. It allows data scientists to create visually stunning dashboards, reports, and charts, making it easier to explore and communicate complex data patterns. With Tableau, users can connect to various data sources, perform data blending and aggregation, and create interactive visualizations that facilitate data exploration and storytelling. It offers advanced features like data mapping, parameter controls, and embedded analytics. Tableau's intuitive interface and robust features make it a go-to tool for data scientists aiming to present their findings in a visually appealing and impactful manner.

Power BI

Power BI is a business intelligence and data visualization tool developed by Microsoft. It enables data scientists to connect to a wide range of data sources, including databases, cloud services, and online APIs. Power BI offers an intuitive interface and a comprehensive set of visualization options, allowing users to create interactive dashboards, reports, and visualizations. With its data modeling capabilities, Power BI supports data transformation, shaping, and modeling to prepare the data for analysis. It also provides features for data exploration, filtering, and drill-down capabilities. Power BI integrates well with other Microsoft tools, making it a popular choice for organizations already using the Microsoft ecosystem.

TensorFlow and PyTorch

These deep learning frameworks are widely used for building and training neural networks. TensorFlow and PyTorch provide a high-level interface for implementing complex deep learning models and conducting cutting-edge research in fields like computer vision, natural language processing, and reinforcement learning.

- TensorFlow: Developed by Google, TensorFlow is an open-source library widely used for machine learning and deep learning tasks. Its flexible architecture allows users to build and train a wide variety of models, ranging from simple neural networks to complex deep learning architectures. TensorFlow offers a high-level API, Keras, which simplifies the process of building and training neural networks. It provides excellent support for distributed computing, enabling efficient utilization of resources across multiple devices or machines.

- PyTorch: Created by Facebook's AI Research lab, PyTorch is another popular open-source deep learning framework. PyTorch focuses on providing a dynamic and intuitive approach to building neural networks, making it easy to prototype and experiment with models. It offers a tape-based autograd system that simplifies the process of defining and computing gradients, facilitating efficient model training. PyTorch is highly regarded for its flexibility and user-friendly interface.
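To give a rough idea of what an autograd system like the one mentioned above does, here is a minimal sketch of reverse-mode automatic differentiation for scalars in plain Python. It is not PyTorch's implementation or API: the `Var` class and `backward` function are hypothetical names, and only addition and multiplication are supported. Each operation records its inputs and local derivatives as it runs, and `backward` replays that record in reverse using the chain rule.

```python
class Var:
    """A scalar value that records how it was computed."""

    def __init__(self, value, parents=()):
        self.value = value
        self.grad = 0.0
        self.parents = parents  # pairs of (parent Var, local derivative)

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))


def backward(output):
    """Propagate gradients from the output back to every input."""
    order, seen = [], set()

    def visit(node):
        # Post-order traversal: a node is appended after all of its parents.
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)

    visit(output)
    output.grad = 1.0
    # Reversed order guarantees a node's gradient is complete before
    # it is passed on to its parents.
    for node in reversed(order):
        for parent, local in node.parents:
            parent.grad += local * node.grad


x, y = Var(3.0), Var(4.0)
z = x * y + x  # dz/dx = y + 1, dz/dy = x
backward(z)
print(x.grad, y.grad)
```

Because the record is built while the code runs, ordinary Python control flow (loops, conditionals) can change the computation from one call to the next, which is the "dynamic" quality the text attributes to PyTorch.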

Data Science Platforms and Cloud Services

Various data science platforms and cloud services, such as Azure Machine Learning, Google Cloud Platform, and Amazon Web Services, provide integrated environments for data processing, model development, deployment, and scalable computing resources.

Data science platforms and cloud services play a significant role in enabling efficient and scalable data analysis and machine learning workflows. These platforms provide a range of tools and services that facilitate data management, model development, collaboration, and deployment. Let's explore the importance of data science platforms and cloud services in the field of data science.

- Data Management: Efficient data ingestion, storage, and processing.

- Collaboration and Version Control: Facilitate teamwork, code sharing, and version control.

- Model Development and Experimentation: Simplify model development with integrated environments and libraries.

- Scalable Computing Power: Access to scalable computing resources for handling large datasets and complex computations.

- Model Deployment and Serving: Streamline model deployment in production environments.

- Automated Machine Learning (AutoML): Automate tasks like feature engineering and model selection.

- Security and Compliance: Ensure data security and compliance with industry standards and regulations.

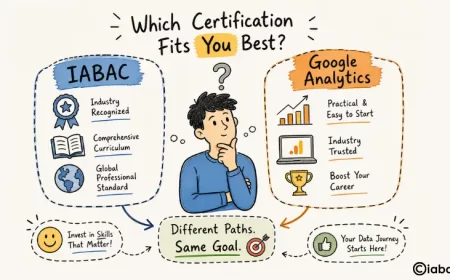

By leveraging these tools, data scientists can effectively tackle complex data challenges, extract insights, and derive meaningful solutions. Understanding and utilizing these tools is often an integral part of data science courses, certifications, and training programs, which equip individuals with the skills needed for a successful career in the rapidly growing field of data science.

The tools used in data science play a crucial role in enabling efficient data analysis, modeling, visualization, collaboration, and deployment. Python and R serve as powerful programming languages, while SQL enables effective data querying and management. Jupyter Notebooks provide an interactive environment for code development and documentation, and Tableau facilitates data visualization. Additionally, data science platforms and cloud services offer comprehensive solutions for data management, collaboration, scalability, and model deployment. By utilizing these tools effectively, data scientists can harness the power of data to derive insights, make informed decisions, and drive impactful outcomes in various industries.